"MCP Sucks" (Until It Doesn't)

The “MCP is dead” discourse peaked earlier this year. Eric Holmes’ post hit #1 on Hacker News. Garry Tan said it “sucks.” Perplexity’s CTO dropped it internally, calling it “too expensive for production.”

Then, two months later, Garry Tan changed his mind.

The MCP critics correctly identified the problem (bloated, auto-converted MCP servers), but in my opinion they blamed the wrong thing: the protocol. I’ve shipped an MCP server, a CLI, and 30+ agent skills. Here’s when each approach actually wins.

CLI Wins When You Have a Terminal

On pure performance, CLI + Skills beats MCP on every measured dimension for developers and agents with terminal access.

I use gh CLI for everything GitHub-related, not the GitHub MCP server. Claude Code defaults to gh too.

```bash
# CLI: 1 tool call, shell filters the result, ~1,400 tokens
gh issue list --limit 5 --json title,state | jq '.[] | .title'

# MCP: model loads 93 tool schemas (~55K tokens), picks one,
# gets the full response in context, then reasons over it
```

ScaleKit benchmarked this across 75 runs using Claude Sonnet 4. CLI used 4–32x fewer tokens per operation. CLI hit a 100% success rate; MCP hit 72%. At 10,000 operations per month, CLI costs ~$3.20 vs MCP’s ~$55.20.

The pipe architecture is why. Intermediate JSON never enters the context window: the shell does the filtering, not the model. Tools like jq, grep, awk, and head are context-efficient filters with no MCP equivalent.
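Here’s a rough Python sketch of the same idea. The issue payload is made up, and token counts use a crude ~4-characters-per-token heuristic rather than a real tokenizer, so treat the numbers as illustrative. What matters is where the projection happens: outside the model, so only the filtered lines would ever reach the context window.

```python
import json

# Made-up payload standing in for a verbose API response: every issue
# carries fields the agent never asked for.
issues = [
    {
        "number": n,
        "title": f"Issue {n}",
        "state": "OPEN",
        "body": "Long description. " * 50,
        "labels": [{"name": "bug", "color": "d73a4a"}],
        "author": {"login": "someone"},
    }
    for n in range(1, 6)
]
raw = json.dumps(issues)  # what an unfiltered tool result would contain

# Shell-style projection, like jq '.[] | .title': only titles survive,
# so only this short string would be returned to the model.
filtered = "\n".join(issue["title"] for issue in issues)


def rough_tokens(text: str) -> int:
    """Crude ~4 characters per token estimate; real tokenizers differ."""
    return max(1, len(text) // 4)


print("unfiltered:", rough_tokens(raw), "tokens")
print("filtered:  ", rough_tokens(filtered), "tokens")
```

Swap in a real tokenizer for exact counts; the gap between the two numbers is the point.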

The context window tax compounds. GitHub’s MCP server exposed 93 tools at the time of that benchmark, roughly 55,000 tokens of schemas before the first message. (They’ve since cut the defaults to 52, but it’s still a lot.) Stack a few MCP servers and you can lose 72% of a 200K context window to schemas alone.
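To put the 72% figure in absolute terms, here’s the back-of-envelope arithmetic using only the numbers above (a 200K window, ~55K tokens for GitHub’s server at benchmark time). It’s arithmetic on the quoted figures, not a new measurement.

```python
CONTEXT_WINDOW = 200_000       # tokens, as quoted above
SCHEMA_SHARE = 0.72            # share lost to schemas, as quoted above
GITHUB_SCHEMA_TOKENS = 55_000  # GitHub MCP server at benchmark time

lost = CONTEXT_WINDOW * SCHEMA_SHARE
remaining = CONTEXT_WINDOW - lost
github_sized_servers = lost / GITHUB_SCHEMA_TOKENS

print(f"{lost:,.0f} tokens of schemas, {remaining:,.0f} left for the task")
print(f"that's roughly {github_sized_servers:.1f} GitHub-sized servers")
```

In other words, fewer than three servers the size of GitHub’s old default tool set get you there.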

Every major coding agent (Claude Code, Codex, Cursor, Aider, OpenHands) uses bash as its foundation because models have been trained on billions of bash examples and zero MCP schemas. Even Anthropic conceded the point: its code execution approach cuts 150K tokens down to 2K, a 98.7% reduction.

The Context Window Complaint Is Outdated

A lot of the "MCP eats my context window" complaints haven't caught up with the fixes. Claude Code now defers MCP tool schemas by default and loads them on demand. Cursor does the same, reporting a 46.9% reduction in total agent tokens. Anthropic's API, OpenAI, and Cloudflare have shipped similar approaches. If you're using Claude Code or Cursor, you already have tool search. If you're building on the raw API, you need to opt in (defer_loading: true per tool). The ScaleKit benchmarks above don't mention tool search and tested against GitHub's hosted MCP server directly, so they likely reflect the pre-tool-search experience. The gap is narrowing fast for coding agents with tool search enabled, but the full context tax still hits API builders and Claude Desktop users who haven't opted in.
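A minimal sketch of the deferred-loading idea, assuming a client that keeps a cheap index (tool names plus one-line summaries) in context and pulls full schemas only when a search matches. The class and method names are hypothetical, and the substring match stands in for real tool search. This is not how Claude Code, Cursor, or Anthropic’s API implement it, and it doesn’t show the actual defer_loading request format.

```python
from dataclasses import dataclass, field


@dataclass
class Tool:
    name: str
    summary: str   # one short line: cheap to keep in context
    schema: dict   # full JSON Schema: expensive, loaded on demand


@dataclass
class DeferredToolRegistry:
    """Hypothetical client-side registry for deferred schema loading."""
    tools: dict[str, Tool] = field(default_factory=dict)
    loaded: set[str] = field(default_factory=set)

    def register(self, tool: Tool) -> None:
        self.tools[tool.name] = tool

    def upfront_context(self) -> list[str]:
        # What the model sees before any search: an index, no schemas.
        return [f"{t.name}: {t.summary}" for t in self.tools.values()]

    def search(self, query: str) -> list[dict]:
        # Naive substring match stands in for a real tool-search step.
        q = query.lower()
        hits = [t for t in self.tools.values()
                if q in f"{t.name} {t.summary}".lower()]
        self.loaded.update(t.name for t in hits)  # these schemas now enter context
        return [{"name": t.name, "inputSchema": t.schema} for t in hits]


registry = DeferredToolRegistry()
registry.register(Tool("list_issues", "List issues in a repository",
                       {"type": "object", "properties": {"repo": {"type": "string"}}}))
registry.register(Tool("create_pull_request", "Open a pull request",
                       {"type": "object", "properties": {"title": {"type": "string"}}}))

print(registry.upfront_context())  # names and summaries only
print(registry.search("issues"))   # full schema for the one match
```

The trade-off is an extra search round-trip in exchange for not paying the full schema cost on every request.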

CLI has a cost most people don’t talk about, though: you’re giving the agent a shell, and the security surface is the entire operating system.

But Why Not Just APIs?

MCP’s auth IS OAuth 2.1, the same standard many REST APIs use. OpenAPI has described APIs for machines since 2015. You can auto-generate MCP servers from OpenAPI specs. Every agent can call REST APIs with requests or curl. No special client libraries needed. MCP adds JSON-RPC, session management, and SSE complexity on top of plain HTTP.

All true. So if MCP generation from OpenAPI is mechanical, why not let each client auto-generate what it needs? Because auto-generated servers are what got us into the 93-tool, 55K-token mess. Curation requires human judgment about what an agent needs vs. what an API exposes.
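A toy sketch of the difference. The spec is a hypothetical, heavily trimmed OpenAPI-style mapping, and the curated allowlist stands in for the human judgment call. Mechanical conversion emits a tool for every operation, including webhook and deploy-key management that an issue-triage agent should never see; curation keeps only what that agent needs.

```python
# Hypothetical, heavily trimmed OpenAPI-style spec: path -> {method: operationId}
SPEC = {
    "/repos/{repo}/issues": {"get": "listIssues", "post": "createIssue"},
    "/repos/{repo}/issues/{n}": {"get": "getIssue", "patch": "updateIssue"},
    "/repos/{repo}/hooks": {"get": "listHooks", "post": "createHook"},
    "/repos/{repo}/keys": {"get": "listDeployKeys", "post": "createDeployKey"},
}


def auto_convert(spec: dict) -> list[str]:
    """Mechanical conversion: one tool per operation, no judgment applied."""
    return [op for methods in spec.values() for op in methods.values()]


# The judgment call: what an issue-triage agent actually needs.
CURATED = {"listIssues", "getIssue", "createIssue"}


def curate(spec: dict, keep: set[str]) -> list[str]:
    """Keep only the operations a human decided the agent should have."""
    return [op for op in auto_convert(spec) if op in keep]


print(len(auto_convert(SPEC)), "auto-generated tools")
print(curate(SPEC, CURATED))
```

Scale the spec up to a real API and the auto-converted list grows with every endpoint, while the curated list grows only when someone decides the agent needs more.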

Several SaaS companies with excellent REST APIs (Linear, Stripe, Slack, Figma, GitHub, Notion, Sentry) built hand-curated MCP servers anyway. REST can do the job technically. What it doesn’t give you: frictionless distribution, non-developer access, and centralized control over what each agent can see.

With a REST API, every consumer gets the same endpoints. With an MCP server, you can scope tools per token. An on-call bot gets get_dag_runs and get_task_logs. A CI agent gets trigger_dag_run. An analyst gets read-only tools. Same server, different tool sets based on who’s connecting.
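A minimal sketch of per-token scoping, assuming the server can resolve each bearer token to a role. The tool names come from the example above; the token strings, role names, and stub implementations are hypothetical. Scope is enforced on both listing and calling, since hiding a tool from the list alone isn’t a security boundary.

```python
# Stub implementations so the sketch runs on its own; tool names are
# from the example above, everything else is hypothetical.
TOOLS = {
    "get_dag_runs": lambda dag_id: f"runs for {dag_id}",
    "get_task_logs": lambda dag_id: f"logs for {dag_id}",
    "trigger_dag_run": lambda dag_id: f"triggered {dag_id}",
}

# Role -> tools that role may see and call.
ROLE_SCOPES = {
    "oncall_bot": {"get_dag_runs", "get_task_logs"},
    "ci_agent": {"trigger_dag_run"},
    "analyst": {"get_dag_runs"},  # read-only
}

# Stand-in for real token validation (e.g. introspecting an OAuth token).
TOKEN_ROLES = {"tok-oncall": "oncall_bot", "tok-ci": "ci_agent", "tok-analyst": "analyst"}


def list_tools(token: str) -> list[str]:
    """Return only the tools this caller is scoped to."""
    return sorted(ROLE_SCOPES[TOKEN_ROLES[token]])


def call_tool(token: str, name: str, **kwargs) -> str:
    # Enforce scope on calls as well; hiding a tool from the list isn't enough.
    if name not in ROLE_SCOPES[TOKEN_ROLES[token]]:
        raise PermissionError(f"{name} is not available to this token")
    return TOOLS[name](**kwargs)


print(list_tools("tok-oncall"))
print(call_tool("tok-ci", "trigger_dag_run", dag_id="daily_etl"))
```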

REST APIs also carry versioning overhead: v1/v2, deprecation cycles, migration guides, client library updates. MCP tools describe themselves at runtime. The model reads the schema dynamically, so the server can evolve without the same breaking-change coordination.

What Neither Solves

“Just use CLI + an API backend with auth and logging.” Sure, you could. But now you also need per-token tool scoping and a schema agents can discover. You’ve just rebuilt MCP, except yours doesn’t plug into Claude Desktop, Cursor, or ChatGPT without custom integration.

Distribution

CLI means downloading a binary, installing dependencies, managing PATH, dealing with platform differences, and handling version skew. REST is better but each consumer still writes their own integration code: auth, endpoint mapping, response parsing. Agent Skills have the same problem. Skill paths vary across skill.sh, Claude Code, and Cursor, and the MCP community is exploring using MCP itself as the distribution channel for skills. (More on Skills and their distribution in a separate post soon.)

MCP: URL + token. Done. The server updates once and every MCP-compatible client (Claude Desktop, Cursor, ChatGPT, Codex, and hundreds more) gets the new version instantly. No binary downloads, no deps, no path resolution, no upgrades on the consumer side. The integration math shifts from M×N (every consumer × every API) to M+N (one server per API plus one MCP client per consumer). Anthropic’s MCP Connector takes this further: API-to-API, no client code at all.

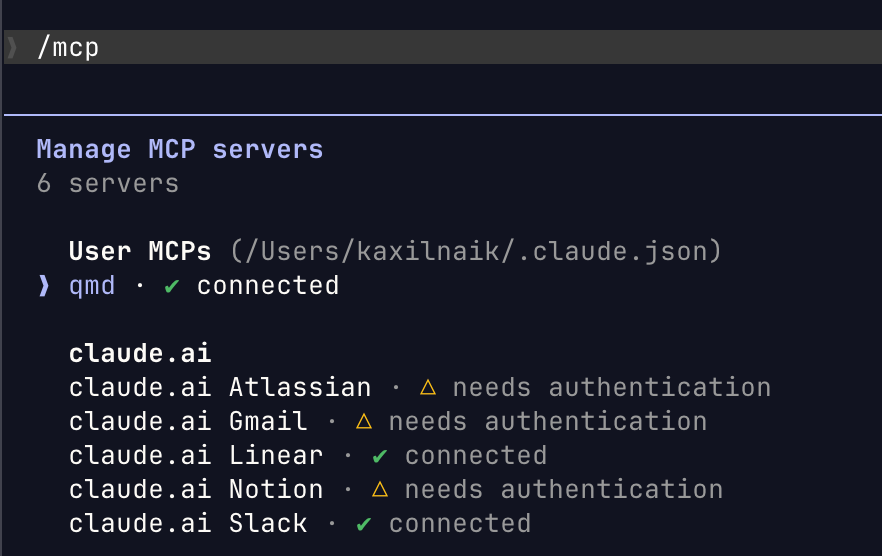
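The integration math with hypothetical numbers (6 agent clients, 20 internal APIs):

```python
def point_to_point(consumers: int, apis: int) -> int:
    """M x N: every consumer writes its own integration for every API."""
    return consumers * apis


def via_protocol(consumers: int, apis: int) -> int:
    """M + N: one server per API, one protocol client per consumer."""
    return consumers + apis


# Hypothetical numbers: 6 agent clients, 20 internal APIs.
print(point_to_point(6, 20), "integrations vs", via_protocol(6, 20))
```

Adding a 21st API costs six more integrations point-to-point, but just one more server with a shared protocol.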

It’s also convenient that Claude Code, Cursor, and other clients can tell you when an MCP server is down or needs authentication.

MCP has a rough edge here, though: try connecting to two Slack workspaces or two Snowflake accounts from the same client. Both server instances expose identically-named tools (send_message, query), and most clients (Claude Code, Cursor) route calls to the wrong instance or suppress one entirely. The spec says clients should prefix tool names to disambiguate, but nobody does it well yet. CLI tools handle multiple instances naturally.
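Here’s roughly what client-side prefixing could look like. The instance names and the double-underscore separator are assumptions for illustration, not a spec requirement or any client’s actual behavior: each tool gets its instance name prepended so the two send_message tools no longer collide, and calls are routed back by splitting the prefix off.

```python
# Two hypothetical instances of the same server expose identical tool names.
SERVERS = {
    "slack_acme": ["send_message", "list_channels"],
    "slack_globex": ["send_message", "list_channels"],
}

SEP = "__"  # assumption: a separator that never appears in instance names


def namespaced_tools(servers: dict[str, list[str]]) -> list[str]:
    """Prefix every tool with its instance name so names stay unique."""
    return [f"{server}{SEP}{tool}" for server, tools in servers.items() for tool in tools]


def route(qualified_name: str) -> tuple[str, str]:
    """Split a prefixed name back into (instance, original tool name)."""
    server, tool = qualified_name.split(SEP, 1)
    if tool not in SERVERS.get(server, []):
        raise KeyError(qualified_name)
    return server, tool


tools = namespaced_tools(SERVERS)
print(len(tools), "tools,", len(set(tools)), "unique")
print(route("slack_globex__send_message"))  # ('slack_globex', 'send_message')
```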

Non-Terminal Consumers and Non-Developers

Some consumers don’t have a terminal at all. A large marketplace company runs an on-call bot that queries their data platform for incident triage. Read-only, no human in the loop, no terminal. It needs HTTP transport with authentication. Claude Desktop users want to check their pipeline runs without installing a CLI. Mobile apps and internal AI products need server-side access. Server-side MCP doesn’t care about machine paths or local config. It works the same whether the consumer is a desktop app, a mobile client, or another service.

Then there are the people who interact with data platforms but aren’t developers. An analyst checking if a pipeline ran, a PM tracking data freshness, an SRE who touches the system once a month and doesn’t want to install a CLI and learn its flags. Server-side MCP gives them access through tools they already use (Claude Desktop, ChatGPT, internal chatbots) without asking them to open a terminal.

Skills need a local environment. They reference files on disk, run scripts relative to a working directory, and assume a terminal. Not an option for a Slack bot, a mobile app, or a non-technical user.

Access Control and Security

Any system with coarse role models has this problem: the API’s permissions don’t match what you’d give an agent. You end up with roles that are either too restrictive (can read but can’t trigger operations) or too permissive (can trigger operations but also read secrets and credentials).

CLI-based agents add another dimension to this. You’re giving the agent a shell, and the security surface is the entire operating system. When Anthropic’s Claude Code source leaked via npm, security researchers at Adversa found that deny rules silently stopped working when a shell command contained more than 50 subcommands. A developer who configured “never run rm” would see it blocked normally, but 50 no-op commands placed before the dangerous one bypassed the check entirely. Anthropic patched it, but the broader pattern is that CLI permission models are hard to get right because the set of possible commands is unbounded.
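The bug class is easy to reproduce in miniature. This is a hypothetical checker, not Claude Code’s code: it stops analyzing past a fixed subcommand budget (50, mirroring the reported threshold), and the fail-open version treats “too long to check” as “allowed.” The naive regex split is itself part of the problem, since real shell syntax (quoting, subshells, command substitution) defeats it.

```python
import re

MAX_SUBCOMMANDS = 50  # hypothetical analysis budget, mirroring the reported threshold
DENIED = {"rm"}       # e.g. a "never run rm" rule


def split_subcommands(command: str) -> list[str]:
    # Naive split on common shell separators; real shell parsing is far harder.
    return [part.strip() for part in re.split(r"&&|\|\||;|\|", command) if part.strip()]


def is_allowed_fail_open(command: str) -> bool:
    parts = split_subcommands(command)
    if len(parts) > MAX_SUBCOMMANDS:
        return True  # BUG: too long to check, so the check is skipped
    return not any(p.split()[0] in DENIED for p in parts)


def is_allowed_fail_closed(command: str) -> bool:
    parts = split_subcommands(command)
    if len(parts) > MAX_SUBCOMMANDS:
        return False  # can't fully check it, so refuse it
    return not any(p.split()[0] in DENIED for p in parts)


padded = "true; " * 50 + "rm -rf ./build"  # 50 no-ops, then the denied command
print(is_allowed_fail_open("rm -rf ./build"))  # False: blocked normally
print(is_allowed_fail_open(padded))            # True: 51 subcommands slip past
print(is_allowed_fail_closed(padded))          # False
```

Failing closed fixes this particular bypass, but it doesn’t fix the underlying parsing problem, which is the author’s point about unbounded command sets.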

MCP servers have their own security risks. Tool responses are untrusted text that the model interprets, and a compromised server can embed instructions that hijack the agent’s behavior (prompt injection). Neither approach is inherently safe. The difference is the attack surface: CLI agents can do anything the shell allows; MCP servers expose a fixed set of operations.

The fix for the role-model problem is a filtering proxy: expose the safe operational endpoints, hide the ones where secrets live. For enterprises, this is where MCP earns its keep. The security boundary lives on your infrastructure instead of on every developer’s laptop. Scoped tokens per team or role. Audit logs showing which agent called which tool and when. You can update access policies without pushing changes to every client. Enterprises already have API gateways (Kong, Apigee) for REST, and those work too. MCP’s advantage is combining the gateway function with distribution and non-terminal consumer access in one layer, instead of running three separate systems.
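A minimal sketch of the filtering-proxy idea. The upstream operation names and return values are hypothetical stubs, and the audit log is an in-memory list rather than a real log sink. Secret-bearing operations are simply never exposed, every call is logged with who, what, and when, and the allowlist lives server-side, so changing it doesn’t touch any client.

```python
from datetime import datetime, timezone

# Hypothetical upstream operations; the last two return secrets.
UPSTREAM = {
    "get_dag_runs": lambda: ["run-1", "run-2"],
    "trigger_dag_run": lambda: "queued",
    "get_connection": lambda: {"password": "example-secret"},
    "get_variable": lambda: {"api_key": "example-secret"},
}

# Server-side policy: only operational endpoints are exposed.
EXPOSED = {"get_dag_runs", "trigger_dag_run"}

audit_log: list[dict] = []


def proxy_call(agent_id: str, tool: str):
    """Check policy, record an audit entry, then forward allowed calls."""
    allowed = tool in EXPOSED
    audit_log.append({
        "ts": datetime.now(timezone.utc).isoformat(),
        "agent": agent_id,
        "tool": tool,
        "allowed": allowed,
    })
    if not allowed:
        raise PermissionError(f"{tool} is not exposed through the proxy")
    return UPSTREAM[tool]()


print(proxy_call("oncall-bot", "get_dag_runs"))
try:
    proxy_call("oncall-bot", "get_connection")
except PermissionError as err:
    print(err)
print([(e["agent"], e["tool"], e["allowed"]) for e in audit_log])
```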

Which One When

Justin Poehnelt calls it the abstraction tax: every layer between the agent’s intent and the API loses fidelity. The question is whether the accessibility gain justifies the loss. His framing: “MCP and CLIs aren’t competing. They’re different answers to the same question: how much fidelity are you willing to trade for accessibility?”

For simple API surfaces, 5–8 curated tools cover the main use cases without severe fidelity loss. For complex APIs with hundreds of endpoints, the tax is much steeper and CLI or direct REST makes more sense.

MCP the protocol is fine. MCP servers built by auto-converting 80 REST endpoints into 80 MCP tools are what poison the context window and confuse the model. Garry Tan landed on this too: “light and purpose-built and engineered.”

Here’s how I think about it:

CLI + Skills for developers and agents with terminal access. 4–32x fewer tokens, pipe architecture, bash is already in the model weights.

Server-side MCP for everyone who doesn’t have a terminal or doesn’t want one: non-developers (analysts, PMs, SREs), non-terminal agents and bots, mobile apps, and enterprises that need a central control plane with scoped tokens and audit logs.

These are layers, not competitors. Skills teach agents how to think about a domain. CLI and server-side MCP are two ways to give agents tool access, optimized for different consumers.

Aaron Ott put it well: MCP is the transport, skills are the instructions. The future is probably both flowing through the same pipe.

Build for both. CLI + Skills where you have terminal access, server-side MCP where you don’t. And whatever you do, don’t auto-convert your entire API into MCP. Curate ruthlessly.

Next up: why Skills are the most powerful layer in this stack, and how to build them without the distribution headaches. Subscribe if you want the details.